Making music used to require a long chain of decisions before anything audible appeared. A melody had to be played, a beat had to be built, lyrics had to be matched to timing, and arrangement choices had to be made one by one. That is why an AI Music Generator feels less interesting as a novelty than as a change in workflow. Instead of beginning with software complexity, you begin with intent: a mood, a scene, a lyric draft, or a rough idea of how a song should move. In my view, that shift matters because it lowers the distance between imagination and a first listenable result. It does not remove judgment, taste, or revision, but it changes where the creative process starts.

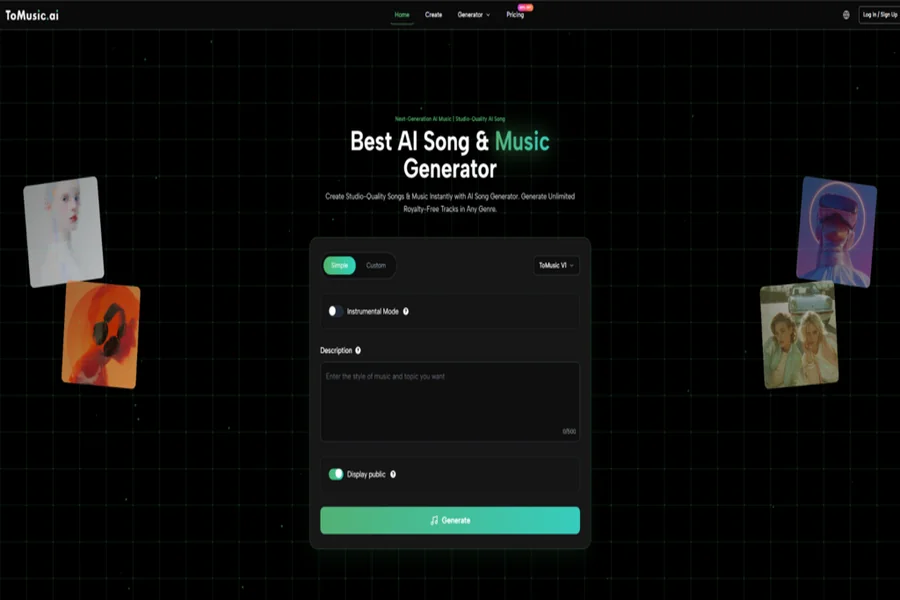

What makes that shift useful is not simply that music appears quickly. It is that the platform is built around different levels of control. On the official pages, ToMusic presents itself as a system that can turn text descriptions or custom lyrics into complete songs, while also letting users choose among multiple models with different strengths. That design suggests a practical idea: not every project needs the same balance of speed, vocal realism, harmonic density, or track length. For creators working under deadlines, that matters more than marketing language. A fast draft for social content and a more expressive vocal piece for a branded campaign are not the same task, so they should not have to rely on exactly the same generation path.

Why Text-Led Creation Changes The Starting Point

When people first hear about AI music, they often imagine automation replacing composition. I think the more useful interpretation is narrower and more realistic. Text-led generation changes the entry point. It lets people begin with language rather than with instruments, piano rolls, or DAW timelines.

Language Becomes A Practical Musical Brief

A text prompt can function like a compact creative brief. Instead of explaining your idea to a composer or trying to translate it into technical production terms immediately, you describe what you want in ordinary language: mood, tempo, style, emotional tone, instrumentation, or use case. That is especially useful for people who know what they want to feel but do not yet know how to build it manually.

Lyrics Become Structural Material

The platform does not stop at descriptive prompting. It also supports custom lyrics, which is a more significant capability than it first appears. Once lyrics are part of the input, the tool is no longer only generating background sound. It is trying to organize words into musical phrasing, vocal delivery, and song form. In practice, that makes the system more relevant to songwriters, marketers creating jingle-style pieces, and creators who want a complete vocal track instead of only an instrumental bed.

How The Official Workflow Actually Operates

Based on the official product and homepage descriptions, the core workflow is fairly straightforward. That simplicity is important, because good creative tools usually become more useful when the first action is obvious.

Step 1. Enter A Prompt Or Your Lyrics

The first step is to provide the source material. According to the official pages, this can be either a descriptive text prompt or custom lyrics. That means the platform supports both idea-first generation and lyric-first generation. For some users, this will be the biggest distinction: are you asking for music from a concept, or are you asking for music that carries words you already wrote?

Step 2. Choose The Model And Creative Settings

The second step is selection and control. ToMusic offers four models, labeled V1 through V4, and the platform description frames them as different creative engines rather than cosmetic variations. The official text also mentions controls such as genre, mood, tempo, instrumentation, custom length, style tags, and voice characteristics. That indicates the service is designed to sit somewhere between simple one-click generation and highly technical production software.

Step 3. Generate The Track

Once the input and parameters are set, the system generates the music. In the official explanation, the AI analyzes the description or lyrics and identifies key musical elements before producing a complete output. From a user perspective, this is the moment where idea translation becomes audible form.

Step 4. Review And Save The Result

The final part of the process is not overly dramatic, but it matters. The official homepage notes that creations can be saved in the platform library. That makes the tool more than a demo engine. It becomes part of an iterative workflow, where tracks are generated, compared, refined, and kept for later reuse or selection.

What The Four Models Suggest About Product Design

A lot of AI music products claim flexibility, but model variety is only meaningful when the differences reflect real creative needs. ToMusic’s official descriptions give each version a distinct role.

V4 Favors Stronger Vocal Expression

The platform describes V4 as the flagship option with the best vocals and fuller creative control. In practical terms, that suggests a stronger fit for projects where the human-like quality of singing matters more than raw speed.

V3 Emphasizes Rich Harmonic Movement

V3 is positioned around advanced harmonies and rhythmic ideas. For users making fuller arrangements or wanting a track that feels a bit less flat in structure, that distinction may be useful.

V2 Extends Duration And Tonal Depth

V2 is associated with longer compositions, up to eight minutes, and a broader tonal feel. That makes sense for cinematic, ambient, or extended-form work where the track needs room to unfold.

V1 Keeps The Process More Direct

V1 appears to be the more balanced and streamlined option, with shorter track length and simpler control expectations. For quick content production, that may actually be an advantage rather than a compromise.

A Clear Table For Understanding The Differences

| Aspect | What ToMusic Presents | Why It Matters In Practice |

| Input method | Text prompts or custom lyrics | Supports both concept-first and lyric-first workflows |

| Output type | Instrumental or vocal songs | Useful for creators who need more than background music |

| Model choice | V1, V2, V3, V4 | Lets users match the tool to the project instead of forcing one path |

| Length control | Includes custom length, with longer tracks on higher models | Helpful for ads, content intros, or more cinematic pieces |

| Style control | Genre, mood, tempo, instrumentation, tags, voice traits | Makes outputs easier to steer without technical production knowledge |

| Usage rights | Commercial and royalty-free positioning on official pages | Important for content teams and business use cases |

| Library workflow | Saved creations in the platform library | Encourages iteration rather than one-off generation |

Where This Feels Useful Beyond Hobby Experiments

The easiest mistake with AI music tools is to judge them only as entertainment. I think that misses the broader use case. The more interesting question is where structured generation saves time without flattening creative intent.

Short-Form Content Production

For social video, internal campaigns, explainers, or repeatable publishing workflows, speed matters. A platform that can generate music from a brief allows creators to test multiple emotional directions without beginning from silence every time.

Early-Stage Song Drafting

For lyric writers, melody can be the missing piece that blocks progress. A system built for Lyrics to Music AI can help move a page of words into something testable. That does not mean the first result is definitive, but it can make revision more concrete because there is now something to react to.

Creative Teams With Uneven Musical Skills

A marketer, founder, video editor, teacher, or solo creator may know the tone they need without knowing how to compose it manually. In that situation, the value is not perfection. It is access. The platform becomes a bridge between taste and output.

What Feels Promising And What Still Depends On Judgment

It would be easy to overstate what this kind of tool solves. In my testing of AI music systems generally, the first generation often works best as a direction rather than as a final master. ToMusic appears stronger when understood in that realistic frame.

What Looks Stronger

The multi-model structure is a meaningful strength because it acknowledges that music generation is not one uniform problem. The support for both custom lyrics and descriptive prompts also makes the platform more flexible than tools that handle only one input style.

What Still Needs User Guidance

Results will still depend on prompt clarity, lyric quality, and how well the chosen model matches the goal. A vague request usually leads to a vague outcome. Sometimes a second or third generation is necessary before the musical identity becomes convincing.

Why Restraint Improves Expectations

The most productive mindset is to treat the platform as a creative partner for drafting, variation, and acceleration. That is a strong role on its own. It does not need exaggerated claims to be useful.

Why This Matters For The Future Of Music Work

The deeper significance of tools like ToMusic is not that they make music effortless. It is that they reorganize effort. More time can go into choosing emotional direction, refining lyrics, comparing versions, and deciding what a track should communicate. Less time has to be spent getting from zero to first sound.

Creative Access Expands Faster Than Technical Access

Historically, musical ideas were often limited by equipment, training, or production software. Systems like this suggest a different pattern: more people can reach a meaningful draft stage, even if final polish still requires discernment.

The Real Advantage Is Faster Iteration

In many creative fields, the first version is not the hardest part because it is the best. It is the hardest because it gives you something concrete to improve. That is where text-to-music platforms may have their most durable value.

If that pattern continues, the most important outcome will not be the disappearance of traditional music creation. It will be the growth of a middle space where more people can sketch songs, test ideas, and move from language to sound with far less friction than before.